Audience-centered product development: establishing a digital product development framework at Te Papa

Adrian Kingston, Museum of New Zealand Te Papa Tongarewa, New Zealand

Abstract

As we all know, ideas for digital products can come from all parts of an organization and can be all shapes and sizes. How do we make sure we are actually working on the most relevant products for our audiences and using finite resources as well as we can? In late 2015, in the lead up to a five-year program of museum renewal, Te Papa established a new digital strategy, traversing audience-centered digital product development, transformation, and digital capability-building. As part of this strategy Te Papa developed and implemented a new Digital Product Development Framework (DPDF). The DPDF was a new set of digital processes grounded in Lean and Agile methodologies to help teams identify, prioritize, and validate ideas; manage resources; deliver new products; and continuously improve Te Papa’s existing digital products and platforms. It consists of a set of clearly defined steps, using product development and managed product lifecycle practices common in digital-first businesses, and includes a starter pack of the best and most relevant tools we could find from Lean, Agile, and Design Thinking. The framework guides the product team through the development of potential product ideas from ideation, through prototyping, procurement, agile development, launch, and ongoing care and continuous improvement. It also allows the wider organization to see all the work happening at any time, prioritize, and constantly manage risk. The DPDF itself was developed in a lean, collaborative, and iterative fashion. This presentation will discuss the development of the DPDF; the tools that form the main components; the roll out, training, and reception; and of course, the difficulties with actual implementation.

Keywords: Product management, agile, lean, design thinking, digital transformation

Background

2015 was a year of significant change for digital development at Te Papa. A new Chief Executive saw digital development as a vital way to increase reach, enhance visitor experience, and transform organizational processes. A new digital directorate was established, with a Chief Digital Officer (CDO) on the executive leadership team for the first time. With the subsequent development of a new digital strategy, new teams and roles were created, as well as new ways of working.

Simultaneously, other strategic priorities were set across the organization, including the renewal, over five years, of all the fixed exhibitions for natural sciences, art, Maori and Pacific cultures, and New Zealand history.

At the same time as the new digital directorate was being established, a project was already underway to replace Te Papa’s main website. It was the first significant digital project at Te Papa to use agile project methodologies with a true user-centered approach to design.

As part of the new directorate’s establishment, we reviewed how Te Papa had developed digital products up to that point. As with many museums, there was a mix of approaches. Aside from the website redevelopment that was already underway, most digital projects were developed with:

- waterfall or ad-hoc project management

- solution, business, or technology driven approaches

- little, if any, user or visitor testing

- limited use of analytics for ongoing improvement

- limited planning for post launch maintenance or activity

- limited cross-organizational view of digital development and success

As is the case in many organizations, there had tended to be a focus on project development, rather than product development.

However, there were a number of great ideas, a desire to do the best possible for our visitors, and talented, passionate staff. There was a significant opportunity to consolidate those strengths and create a framework that would focus on making the best possible products for our audiences.

The day a project is delivered, a product is born: the development of the DPDF

In late 2015, CDO Melissa Firth contracted the Product Space (http://www.theproductspace.com/) to work with Te Papa’s digital team to co-design a workflow that would allow for user-focussed product development and management, making sure Te Papa was creating the right digital products for the right audiences in a sustainable and efficient manner. Key areas of focus for the framework were user problem identification and validation, audience-insights, user testing, rapid prototyping, iterative development, and continuous improvement. Pulling together a number of tools and processes from Design Thinking and Lean and Agile, the Product Space drafted up what would become Te Papa’s Digital Product Development Framework, or DPDF.

The framework wasn’t going to operate in isolation from the rest of the organization: in fact, it was vital that it was seen as a core approach integrated with the rest of the museum. Additionally, as an autonomous Crown Entity approximately 50% funded by the government, Te Papa has a number of government statutes and policies it needs to adhere to. This meant we needed to validate any proposed new processes against existing (and often quite weighty) financial, governance, risk management, procurement, and reporting processes and policies across the organization. This took lots of consultation, and lots of iteration to embed findings from each consultation.

During this iterative process, we took on a light and transparent approach, using a hand-drawn and constantly updated diagram outlining major steps of the framework, rather than heavy documentation. The diagram was pinned to the wall to be as visible as possible, and to be available to be discussed at a moment’s notice. Successful working practices from the current website redevelopment team were tested and integrated into the DPDF, and the website redevelopment immediately benefited from the framework; for example, we added a High Care period post launch—a concept not originally planned for the website development.

At the end of this period we had what we called the Digital Product Development Framework (beta). We could have continued to refine the framework until we felt it was “finished,” but of course we had to start using it with real teams and products in order to learn and iterate.

The DPDF steps, described

The DPDF lays out a set of phased steps that all products must go through:

- Strategic alignment

- Problem validation

- Approval to proceed, Product Portfolio Kanban

- Solution validation

- Develop and launch

- High care

- Sustain and grow
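As a rough illustration only, the phased steps above can be modelled as an ordered sequence. This sketch is not part of the DPDF itself; the `Step` names and the `next_step` helper are hypothetical and simply mirror the list.

```python
from enum import IntEnum
from typing import Optional


class Step(IntEnum):
    """DPDF steps in the order listed above (names are illustrative)."""
    STRATEGIC_ALIGNMENT = 1
    PROBLEM_VALIDATION = 2
    APPROVAL_AND_PORTFOLIO_KANBAN = 3
    SOLUTION_VALIDATION = 4
    DEVELOP_AND_LAUNCH = 5
    HIGH_CARE = 6
    SUSTAIN_AND_GROW = 7


def next_step(current: Step) -> Optional[Step]:
    """Return the step that follows `current`, or None after the last step."""
    if current is Step.SUSTAIN_AND_GROW:
        return None
    return Step(current + 1)
```

Treating the steps as an ordered type makes the "every product goes through every phase" rule explicit; it deliberately leaves out any size-based shortcuts.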

Strategic alignment

A self-assessment form to check that the idea matches current Te Papa organizational and departmental strategies. A very simple but basic check; is this something we should even be thinking about?

Problem validation

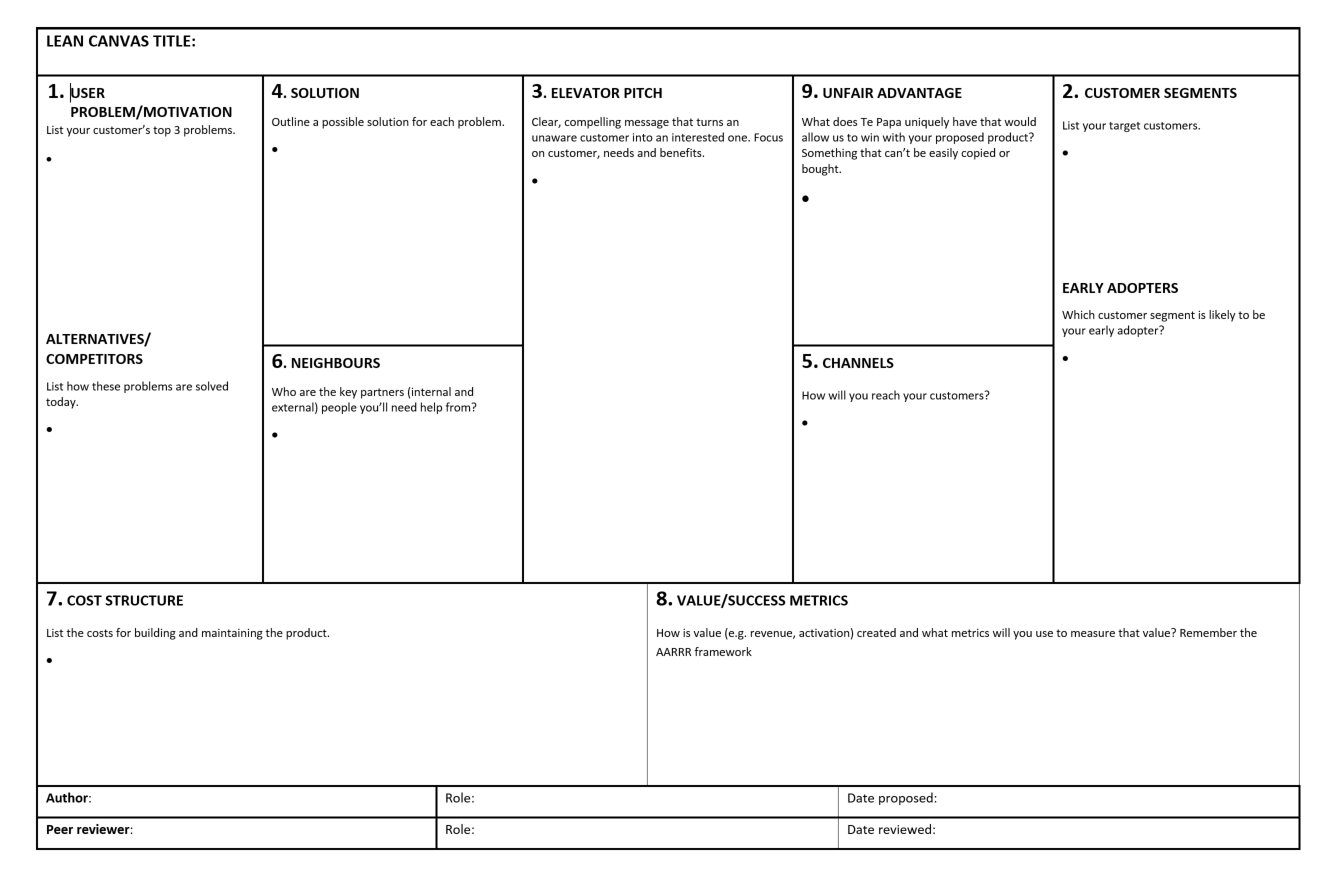

This step aims to ensure the proposed product has an identifiable audience need and a fit with audiences. It is important these questions are answered quickly and early before any significant expenditure of organizational resources or time. There are two mandatory tools for this step: The Te Papa Lean Canvas and identified target audience segments, as well as a few optional tools that may help validate the problem/solution fit.

At the heart of the Problem Validation step is the Lean Canvas.

Te Papa’s Lean Canvas is a slightly modified version of the Lean Business Model Canvas (http://leanstack.com). The modifications are minor, mainly acknowledging that most digital products made by museums are not based on financial return; therefore, forms of value other than revenue are used as a metric of success. Commercial opportunities are still identified where relevant. The Canvas structure means the core aspects of a business model (there is always a business model, even when it is not a commercial initiative) are covered, tested against each other, and can be validated through rapid testing.

The canvas is usually developed by a small group: the product owner, possibly an agile/lean coach, subject experts, and a UX team member. Using a Business Model Canvas to develop, build support for, and test ideas is much faster and more collaborative than creating a traditional, multi-page business case. Its brevity focusses the initiative’s owners on answering the most important product questions, and also means that it actually gets read by stakeholders and neighbors, whose input in turn strengthens the proposition.

The Canvas is targeted squarely at audience needs over business needs, quickly identifying solution bias, ensuring we do not create business solutions without a problem to solve or without an audience. Assuming the problem/solution fit is validated, the Canvas also becomes a living dashboard or constant checkpoint throughout the subsequent product development process.

1.

1a. User problem

Identify the three problems (in relation to the proposed product) the potential user has. You should be able to say what evidence you have that these problems exist. (If you can’t, that’s your first round of validation activity.) A useful way to think about user problems in relation to museum digital experiences is through the Jobs To Be Done framework. What job does this product do for a key audience member in its intended context?

1b. Alternatives/competitors

How are the users solving these problems now? Who or what would this proposed product be competitive with? This includes a scan of both internal and external alternatives—digital and analog.

2.

2a. Customer segments

The target or likely audiences for the product.

2b. Early adopter

Defining the more specific attributes and behaviors of the audiences that are likely to be the first or most important group of people we could reach and engage successfully with the product.

3.

Elevator pitch

Clear, simple, compelling message that turns an unaware customer into an interested one. Sometimes combined with a “Commander’s Intent” or short strapline that sells the magic of the idea.

4.

Solution

The proposed solution for each identified user problem. This is highly unlikely to define a complete final product, rather it should be a high-level description of approach and highest value features, which can be tested and validated.

5.

Channels

How will you reach your audience? This could include in-gallery (e.g. signage, Wifi login screen, Host recommendations) or online channels (social media, website promotion, SEO, advertising, target audience platforms).

6.

Neighbors

Who will you need, inside and outside the museum, to help you create a successful product? Have you talked with them about the possible product?

7.

Costs

List the approximate costs for building and maintaining the product. Obviously without knowing exactly what the final product might be, you can’t have a completely accurate budget breakdown; but you should be able to identify the main areas of cost (for example, estimated hardware costs, development, hosting, testing, marketing, etc.), and have a range of costs. These costs must include the full lifespan of the product, including ongoing staff resources needed; i.e. ensuring there are people available to manage, maintain, and improve the product, look after any community using the product, or any other need to ensure the product is successful beyond launch. This sounds obvious, but it is often overlooked. This costing step is generally done as a basic financial model in a separate spreadsheet, with the key figures rolled up into the Canvas.

8.

Value/success metrics

How is value return created, and how will it be measured? We use Dave McClure’s Pirate Metrics (McClure, 2007), known as AARRR for Acquisition, Activation, Retention, Revenue (Return), and Referral, as a framework to help identify success metrics.

9.

Unfair advantage

What does Te Papa uniquely have that would allow us to “win” with the proposed product? In the private sector, this is something that can’t be easily copied or bought by a competitor. In the museum context, it’s about being mindful of what we can bring to the product from Te Papa’s existing set of services, channels, and experiences that will maximize its value to audiences.
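For readers who think in data structures, the canvas sections above could be captured as a simple record. This is a minimal sketch under stated assumptions, not Te Papa’s actual template: the class name `LeanCanvas`, its field names, and the `empty_sections` helper are all hypothetical, and the only sample value is one of the user problems quoted later in this paper.

```python
from dataclasses import dataclass, field, fields
from typing import List


@dataclass
class LeanCanvas:
    """One field per canvas section described above (field names are illustrative)."""
    user_problems: List[str] = field(default_factory=list)      # 1a
    alternatives: List[str] = field(default_factory=list)       # 1b
    customer_segments: List[str] = field(default_factory=list)  # 2a
    early_adopter: str = ""                                      # 2b
    elevator_pitch: str = ""                                     # 3
    solution: List[str] = field(default_factory=list)            # 4
    channels: List[str] = field(default_factory=list)            # 5
    neighbors: List[str] = field(default_factory=list)           # 6
    costs: str = ""                                              # 7
    success_metrics: List[str] = field(default_factory=list)     # 8
    unfair_advantage: str = ""                                   # 9

    def empty_sections(self) -> List[str]:
        """Names of sections that have not been filled in yet."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# A canvas with only the first user problem drafted.
canvas = LeanCanvas(
    user_problems=["I like art, but I feel intimidated by the gallery"],
)
remaining = canvas.empty_sections()  # every section except user_problems
```

A check like `empty_sections` only shows which boxes are blank; it says nothing about whether the assumptions in the filled boxes have been validated with real users.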

A key component of the canvas, as with any good product development tool, is a focus on the user, the person with a need, someone (other than ourselves) that we are investing our time and money building the product for.

Audience segmentation

Ahead of its museum renewal program, Te Papa recently adopted the Morris Hargreaves McIntyre (MHM) Culture Segments (Morris Hargreaves McIntyre, 2013) to help ensure we are building our exhibition experiences in a way that will meet Te Papa’s defined audiences’ expectations and psychographic motivations.

However, the MHM model currently has limited data on how visitors engage specifically with digital experiences on-floor (though much of the overall motivational data still applies). There is also limited data on how visitors are motivated online in their daily “non-cultural” lives, a digital space in which we would like to reach them with our online products and social media.

To ensure Digital’s approach (lean) was aligned with the wider museum’s approach to audience engagement through the Culture Segments, the solution was to make MHM the overarching segmentation tool to be used for all digital products in the “Customer Segments” section of the lean canvas. Then in the next step, when identifying the “Early Adopter” for the proposed product, the Canvas goes into more detail, describing the specific attributes of the potential audience segment that will make the product “win”: those who will not only love the product for the way it meets their needs and motivations, but also potentially promote the product to wider audiences.

There are a number of Lean and Design Thinking tools that can help ensure a product idea is on the right path. Product Owners need to test their assumptions, check their ideas against actual user needs, and preferably, talk to real people to get real world information. The Problem Validation phase is an important stage to get evidence that the proposed product does indeed have an audience. One tool that is optional is the Customer Problem Interview. This is a structured approach to gathering data from actual people, but also an opportunity to get off paper and into the field. As UX practitioners know, the importance of getting out of back of house and actually talking to key users in their context cannot be overstated.

The canvas does not define a final, locked-down definition of a product, rather an articulation of the product team’s assumptions and hypotheses about the product; an articulation that can then be tested and validated (or invalidated) early in the process. A canvas is never really “finished;” as a living document, it changes and adapts as you learn through testing and building. However, once a Product Owner is comfortable that the assumptions are an accurate picture of reality, it is ready to move forward to the next stage, Approval.

Submit for approval

All product proposals must be submitted to the Digital Steering Committee, comprised of a small group of people from Digital, Technology, and key business owners. The proposal package comprises a Strategic Alignment check, a completed Lean Canvas with clear MHM alignment, and any supporting documentation, such as results from Customer Problem or Solution Interviews, audience research and market scans, or wider exhibition concept development documents. The product should also be roughly sized (S, M, L) based not just on potential costs, but on other factors such as internal resources required, potential audience impact and reach, length of development time, and lifespan of product. The approval stage is a pragmatic requirement to ensure the best products get through for further development, and also to manage workload and available resources. It is designed not to be overly bureaucratic, but to continue the light, lean, and agile touch of the rest of the DPDF.

Product Portfolio Kanban

Approved product proposals enter the Product Portfolio Kanban to allow for tracking and management of the overall resources and amount of work underway at any particular point. Product Managers meet weekly to update the Product team so the overall team knows exactly what is happening, and can look for bottlenecks or other problems. Also, the rest of the organization can see at any time how much work is in progress and due for release. Once the product enters the Portfolio Kanban, the product team has an inception workshop—establishing the team, reviewing the Canvas, and running through a small number of exercises to get the whole team on the same page regarding the problem, audience, and path to solution.
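One way to picture the Portfolio Kanban is as products grouped into columns by stage. The sketch below is an assumption-laden illustration rather than a description of Te Papa’s board: the function names, the product names, and in particular the work-in-progress limit used to flag bottlenecks are hypothetical (a WIP limit is common Kanban practice, but this paper does not state that one was used).

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def board_by_stage(products: List[Tuple[str, str]]) -> Dict[str, List[str]]:
    """Group (product name, stage) pairs into Kanban columns keyed by stage."""
    columns: Dict[str, List[str]] = defaultdict(list)
    for name, stage in products:
        columns[stage].append(name)
    return dict(columns)


def bottlenecks(board: Dict[str, List[str]], wip_limit: int) -> List[str]:
    """Stages holding more products than the assumed work-in-progress limit."""
    return [stage for stage, names in board.items() if len(names) > wip_limit]


# Hypothetical products, for illustration only.
board = board_by_stage([
    ("Product A", "Solution validation"),
    ("Product B", "Solution validation"),
    ("Product C", "High care"),
])
crowded = bottlenecks(board, wip_limit=1)
```

The point of such a view is the same as the physical board: anyone can see how much work sits in each stage and where it is piling up.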

Solution validation

For estimated “Small” products, the product team will move straight to step 5—develop and launch.

For Medium and Large products, 10% of the proposed budget is released to the product team to more comprehensively prove the problem and validate solutions. The methods used here include extensions of those used in Problem Validation as well as other agile, design thinking, and lean tools. At the end of the agreed number of sprints, or set timeframe, the outputs are assessed to help determine next steps, changes/modifications, or whether to stop. If required, we may repeat this step before unlocking further funds. During this phase the Product Team’s output will vary depending on the size of the product and may include prototypes or proofs of concept, a backlog for a Minimum Viable Product (MVP), or even a business case.
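The funding rule for this step is simple arithmetic, sketched below. The function name and the budget figures in the usage lines are hypothetical, and returning zero for Small products is an assumption that follows from them skipping this step; only the 10% figure and the S, M, L sizes come from the framework as described here.

```python
def solution_validation_release(size: str, proposed_budget: float) -> float:
    """Funds released for Solution Validation, given a product's rough size.

    Small ("S") products skip this step, so nothing is released for it here
    (an assumption); Medium ("M") and Large ("L") products receive 10% of
    the proposed budget.
    """
    size = size.upper()
    if size not in {"S", "M", "L"}:
        raise ValueError(f"unknown product size: {size!r}")
    if proposed_budget < 0:
        raise ValueError("proposed_budget must not be negative")
    if size == "S":
        return 0.0
    return proposed_budget * 0.10


# Hypothetical budgets, for illustration only.
small_release = solution_validation_release("S", 50_000)
large_release = solution_validation_release("L", 200_000)
```

If the step is repeated before further funds are unlocked, as the text allows, the amount released for the repeat would need to be agreed separately; this sketch does not model that.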

Develop and launch

Working with an external vendor, or working entirely in-house, build the product! Rather than the previous waterfall approach of attempting to decide what the delivered product looks like months before launch, we use agile methodologies for planning and development. Regular sprint (usually two weeks) planning and backlog prioritization ensures the aspects of the product that deliver the most value to our visitors are delivered first. Using regular and continuous learning as part of the development process keeps the product, budget, and team health at optimal levels. Regular reporting at the end of each sprint and the Portfolio Kanban process keeps the progress and product transparent.

High care

Product launch is the beginning, not the end. Depending on the size of the product, a fixed time (for example three months for the development of the main organizational website) is allocated post-launch for most of the team to stay together, with an allocated budget, to manage the post-launch activities of the product. There are three main areas of focus for high care: (1) to monitor and review metrics, user feedback, and make changes as required; (2) to prioritize and address backlog items not prioritized for launch but that would still provide value; and (3) to embed business as usual (BAU) or continuous improvement (CI) processes for the product.

Sustain and grow

All products need ongoing budget, Product Ownership, monitoring of user metrics and stability, and a plan for retirement when appropriate. Online products may need community management, social media monitoring, and promotion. The DPDF helps with this by identifying resources (human and financial) early in the product development: a requirement of the approval process.

DPDF beta introduction

Once the DPDF beta had been developed and tested against the organization’s risk management, financial, and project management frameworks, the framework was introduced to the wider organization. Introductory presentations were run over a number of weeks, beginning with the digital and IT teams, the executive leadership team, the finance team, and then to wider project management teams, curatorial, exhibition, and development teams, and human resources.

Lean Canvas workshops were run with the digital team and other interested parties. This had two benefits. Firstly, it was a key way of embedding new ways of thinking, particularly focussing on user needs rather than organizational needs. Secondly, it gave us a number of potential museum test cases and helped us modify the canvas to better meet the museum sector.

Initial reception to the framework was cautiously positive; however, it was still very early in the days of the new digital directorate, which was still in the process of building the team in size and capability. A number of new tools and ways of working were foreign to existing staff, and once we actually started using the DPDF for new product development, we learned a considerable amount about introducing such a new way of working. Bumps in the road are a normal, expected part of an iterative process, and the ultimate benefit of learning by doing and adjusting to real information is that we will end up with a process that really works for us.

When the rubber hits the road

Digital team still forming

With the digital directorate still being established, not only were we developing new processes to work with, we were still in the process of getting approvals for funding new roles. We were in a slightly chicken and egg situation, where we were trying to develop a process for which we had no people. We were introducing Product Management but had no Product Managers. It was difficult for staff from the broader organization who would be working with the digital team to imagine the process in more practical terms. This could only resolve with time: time to begin recruiting while in the meantime demonstrating the process with smaller products we could develop with the small team we already had.

Wider organizational change

As mentioned earlier, the organization was undergoing significant changes aside from a new focus on digital: a major capital program, and the first full exhibition renewal program in nearly 20 years (a process that is expected to take a total of five years). It was an exciting opportunity for everyone involved, but it meant there were other organizational processes that were changing, or being modified, and new departments and roles being established. Also during this period, some key staff positions changed.

When the DPDF was first designed, an approach for organization-wide business planning, budgeting, and approvals was in place. This set the context for the framework to work in, particularly for the DPDF steps of Problem Validation, Approval, and Solution Validation. As the Renewal program planning progressed, these other frameworks and approvals changed.

This meant we needed to make sure the DPDF kept in step with changes across the organization. We spent more time than we would have liked adapting to change and discussing new forms of reporting and budgeting; but through much negotiation, the core of the DPDF has stayed the same.

Alignment with exhibition renewal processes

While the DPDF is straightforward for stand-alone products or channel development, such as websites, we have had to do a little more work to ensure this method of delivery also works in the context of waterfall delivered exhibitions, where digital experiences are part of a wider concept. Obviously, it is vitally important that the framework for developing digital components of exhibitions aligns with the wider exhibition development process. With the DPDF emphasizing problem validation and prototyping as early as possible, rather than looking for solutions too early and ensuring enough time for later iterative development, there was a misalignment of timing between the two processes. This was particularly evident in concept development and cost estimation phases. Finding exactly the right time to be using the tools of the DPDF, such as the lean canvas, has also proved challenging, and will no doubt vary from product to product and between exhibitions. Flexibility, transparency, and collaboration will be the ongoing solution for aligning key milestones on the two parallel processes.

“But we’re not a startup”

“But we’re not a startup” is a comment heard in a number of the DPDF workshops with staff: and it’s true. We’re not Spotify or Google, or any of the successful local digital companies in Wellington that have created successful products or businesses using similar processes and tools. We are a museum that has large online and physical audiences, a need to use our resources wisely to meet the greatest audience benefit and expectation, and a need to transform our processes to deliver value as efficiently as possible to the highest quality possible, minimizing waste along the way.

The “problem” problem

Possibly the most visible component of the DPDF process and toolset at the outset is the Lean Canvas, used to validate whether there is actually a problem worth solving, an audience for a solution, and a need to be filled. We deliberately limited the changes we made to Ash Maurya’s Lean Business Model Canvas so as not to dilute the strength of the tool and to not go too easy on ourselves. Some of the questions are hard to answer, because often the ideas we have are based on assumptions and legitimately do not serve a purpose for our users. Loosening up the canvas because it’s too hard defeats the purpose of the tool. We want to genuinely prove there is a problem and a need before committing too many resources to a product that may ultimately have little audience value.

Most staff who have been through the Lean Canvas have struggled with the very first step, in three different ways. At first they relish the idea and fill in a problem from a business problem perspective, e.g. “we want to increase visitation to webpage x.” This is actually a very natural and useful step, as it very quickly highlights organizational or solution bias and gets it down on paper. Next is often the realization that it is actually very difficult to come up with a user problem for some product ideas, because there simply isn’t a need, usually because the person has started with a solution. Again, this is a valuable discovery as early as possible. Lastly, there is a reluctance to use the word “problem.” There is a perception that as a museum, our visitors do not have “problems,” they only have “interests,” “motivations,” or “opportunities.” But once we start using examples such as “I like art, but I feel intimidated by the gallery,” or, “I know you have great images of your collections, but I don’t know where to start,” or, “I like to use the museum’s interactives and videos, but the controls are all different and I’m confused,” or, “I want my kids to learn about climate change, but the science is hard to communicate without scaring them,” there is usually a lightbulb moment.

Using “problem” forces us to consider the product from the visitor’s point of view, rather than from the solution view of the product team. Using “opportunity” would allow us to start with business or solution assumptions and then allow them to continue. A “problem” is much easier to validate one way or the other with visitors (do you have a problem with x? Yes or no?) as opposed to an “opportunity” (do you think you might be interested in this?). Opportunities tend to favor the organization, rather than the visitor.

We ended up with a compromise for labels as a result of the organizational discussions around the important components of the Canvas. We moved to “User Problem/Motivation,” and as we go through the process with more products in development, we continue to monitor how we look at our users and their needs.

Scale and overview

When the DPDF was originally designed, products would enter the Digital Product Portfolio Kanban once they were approved for development. We quickly learned this gave us very little lead-in time for managing resources and timelines; we could be hit with a number of large products all at one time. Also, it obscured the useful work people were doing in the Problem Validation stage. All possible products now enter the Portfolio Kanban much earlier, from the Problem Validation stage, or possibly as early as in the Idea stage. This change gives a much clearer picture for the product team to see what is coming, not just what is already in play. This became particularly important as more and more potential products entered the stream as part of the increasing pace of the exhibition renewal program.

Test, learn, and iterate

In addition to the more specific areas of change identified above, we are learning all the time as we continue to use the DPDF. New tools enter the framework as we look for alternatives to elements that we find don’t work so well, or elements that come along with the expertise of new digital staff now that the team is growing to full complement. Examples include successfully trialling the Google Ventures Design Sprint process (Google Ventures, 2016) to enhance rapid solution validation and prototyping, and introducing Clayton Christensen’s “Jobs to be Done” (Christensen et al., 2016) methodology to help us identify our customer needs, helping with our “problem” problem. We will always look for ways to improve the way we work, because we are not the only ones working in this way.

A focus on communication and transparency has also meant we’ve learned a lot about how we talk within our teams and to the wider organization. A major focus of the deployment of the framework (and the setup of the Digital team in general) was ensuring that the wider organization continued to see Digital as an enabler to all aspects of the business, integral to how they engage with their audiences, rather than another “vertical” of the museum. One of the best ways to do this was to make sure everyone could see what we were working on. The Portfolio Kanban was a significant part of this, as it had the potential to provide an overview of all digital products in development, as well as their progress. As such, it has gone through three major (and many minor) revisions to date.

The first version was designed for the Digital Product and Operations Team, but we realized that version didn’t meet our communication goals. The second made it much more visible (including moving the physical location to be closer to other departments), but it didn’t use the language that other processes used. The most current version tries to show where individual products are in the DPDF, and also how that relates to exhibition development progress.

Conclusion

Overall, the Digital Product Development Framework has been a step in the right direction, adding rigor to digital product management at Te Papa. It has added focus on our audience’s needs throughout their engagement with Te Papa, and has helped us manage the resources we have to focus on the most important, value-delivering products first.

The organization was enthusiastic overall, but there have been some bumps along the road, particularly when trying to introduce completely new processes and ways of thinking into an organization that is historically successful and already undergoing significant change. Communication, genuine consultation, and a willingness to compromise and adapt have been crucial to rolling out the DPDF.

We’ve learned a lot since using the tools on real world products, and there is more to learn. We have made significant improvements and seen some “firsts” in the few months the DPDF has been running. Most importantly, we’ve tried to make sure the process is useful, and that the process is followed (but not to the detriment of delivery; i.e., we still need to produce, not just tick process boxes). Just like the digital products the framework is there to support, the DPDF needs to test, learn, adapt, and continuously improve.

References

The Product Space. (2016). Consulted February 12, 2017. Available http://www.theproductspace.com/

Maurya, A. (2016). Leanstack Lean Canvas. Consulted February 12, 2017. Available http://leanstack.com/lean-canvas

Morris Hargreaves McIntyre. (2013). Culture Segments. Last updated July 25, 2013. Consulted February 2017. Available http://mhminsight.com/articles/culture-segments-1179

McClure, D. (2007). Startup Metrics for Pirates, AARRR! Last updated August 8, 2007. Consulted February 12, 2017. Available http://www.slideshare.net/dmc500hats/startup-metrics-for-pirates-long-version/2-Customer_Lifecycle_5_Steps_to

Google Ventures. (2016). The Design Sprint. Consulted February 12, 2017. Available http://www.gv.com/sprint/

Christensen, C. M., T. Hall, K. Dillon, & D. Duncan. (2016). “Know your customers’ ‘jobs to be done.’” Harvard Business Review. Consulted February 12, 2017. Available https://hbr.org/2016/09/know-your-customers-jobs-to-be-done

Cite as:

Kingston, Adrian. "Audience-centered product development: establishing a digital product development framework at Te Papa." MW17: MW 2017. Published January 31, 2017.

https://mw17.mwconf.org/paper/audience-centred-product-development-establishing-a-digital-product-development-framework-at-te-papa/