DIY Zooniverse citizen science project: engaging the public with your museum’s collections and Data

Laura Trouille, The Adler Planetarium, USA, Chris Lintott, University of Oxford, UK, Grant Miller, University of Oxford / Zooniverse, UK, Helen Spiers, University of Oxford, UK

Abstract

Museums are increasingly using citizen science as a tool to engage their visitors, create metadata for digitized materials in their collections, and assist in their research efforts. Zooniverse, the leading online citizen science platform with 1.5 million registered users and over 45 active projects, recently released its DIY Project Builder which enables anyone to create their own Zooniverse project for free. Through the user-friendly, browser-based interface, project builders select among marking, annotation, and transcription tools, upload their content and data, and export the classification results. In this session, we will present the Project Builder and how museum staff and researchers are using it to engage their visitors and volunteers around the world in their research and collections.

Keywords: citizen science, research, crowd sourcing, science, outreach

Introduction

The Zooniverse, born out of the hugely successful Galaxy Zoo project (Lintott et al., 2008), has grown over the last decade to become the world’s largest and most popular online citizen science platform. It is currently home to over 50 active projects across disciplines, including astronomy, the humanities, ecology, and biomedical sciences (www.zooniverse.org).

The recent accelerated growth of the Zooniverse platform is due to the development of a new infrastructure, which most importantly includes the Project Builder interface (www.zooniverse.org/lab), allowing anyone to build their own Zooniverse project for free without technical expertise. The development of the new platform was driven by the fact that the relatively small Zooniverse Web development team could only build a handful of the over one hundred projects proposed each year.

Development of the Project Builder began in early 2014, funded by a Google Global Impact Award (https://www.google.org/global-giving). The initial test of the new platform was the Snapshot Supernova project, launched live on British television during BBC Stargazing Live in March 2015, and the Project Builder was officially unveiled in July 2015.

Functionality

The Project Builder interface allows users to quickly and easily upload images (subject sets) and set the tasks they require volunteers to perform (the workflow). Images can be uploaded in .jpg, .png, .gif, or .svg format. Each image has a maximum size of 600 KB (this limit is imposed so that browser load times on typical Wi-Fi connections are reasonable), and each user has an initial upload limit of 10,000 subjects. When uploading subjects, the user can add a manifest, which is a simple CSV file used to list the subject filenames and associate any desired metadata. The manifest can also be used to combine two or more images as one subject.
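As an illustration of the manifest described above, the following minimal sketch checks images against the stated format and size limits and writes a small CSV manifest. The column names (image1, image2, site, date), the filenames, and the reading of 600 KB as 600,000 bytes are assumptions made for this example; they are not taken from the Project Builder documentation.

```python
import csv
import io
from pathlib import PurePath

ALLOWED_EXTENSIONS = {".jpg", ".png", ".gif", ".svg"}  # formats listed in the text
MAX_IMAGE_BYTES = 600 * 1000  # 600 KB limit; assumes 1 KB = 1000 bytes


def check_image(filename, size_bytes):
    """Return a list of problems for one image; an empty list means it passes."""
    problems = []
    if PurePath(filename).suffix.lower() not in ALLOWED_EXTENSIONS:
        problems.append(f"{filename}: unsupported format")
    if size_bytes > MAX_IMAGE_BYTES:
        problems.append(f"{filename}: larger than 600 KB")
    return problems


def build_manifest(subjects):
    """Write one CSV row per subject and return the CSV text.

    The column names below are hypothetical; a real manifest can use
    whatever metadata columns the project owner wants.
    """
    fieldnames = ["image1", "image2", "site", "date"]
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    for subject in subjects:
        writer.writerow(subject)
    return buffer.getvalue()


subjects = [
    # Two filenames on one row: both images are combined into a single subject.
    {"image1": "cam01_0001.jpg", "image2": "cam01_0002.jpg", "site": "A", "date": "2016-05-01"},
    # One filename only: an ordinary single-image subject.
    {"image1": "cam02_0001.jpg", "image2": "", "site": "B", "date": "2016-05-02"},
]
manifest_text = build_manifest(subjects)
```

Running check_image over every file before upload catches oversized or unsupported images early, and manifest_text can be saved as a .csv file alongside the images.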

The project owner sets the tasks they want the volunteers to perform. Initially the Project Builder only supported two task types, question and drawing. The question task allows the project owner to set a series of questions for volunteers to answer based on the subject they are viewing. Project owners can control what happens next in the workflow based on the answer to each question. The drawing task allows the project owner to choose from a range of marking tools that can be used by volunteers to annotate or highlight specific regions of an image. More recently two new task types have been added—survey and text. The survey task allows project owners to set a large grid of possible options of things to identify in each subject, such as a species list for a camera trap project. The text task provides a tool for free text entry. New task types are initially added as admin-only features that the Zooniverse team can test on individual projects before opening them up to all project builders.
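The branching behaviour of question tasks can be pictured with a small sketch. The dictionary layout, task keys (T0, T1, T2), and wording below are hypothetical and chosen only for illustration; they do not reproduce the Project Builder's internal workflow format.

```python
# Hypothetical workflow: task keys, field names, and wording are illustrative only.
WORKFLOW = {
    "T0": {
        "type": "question",
        "question": "Is there an animal in this image?",
        # Each answer names the task that follows; None ends the classification.
        "answers": {"yes": "T1", "no": None},
    },
    "T1": {
        "type": "drawing",
        "instruction": "Mark each animal with a point.",
        "next": "T2",
    },
    "T2": {
        "type": "text",
        "instruction": "Describe anything unusual.",
        "next": None,
    },
}


def tasks_seen(workflow, first_task, answers):
    """Return the ordered task keys a volunteer sees for the given answers."""
    path = []
    current = first_task
    while current is not None:
        path.append(current)
        task = workflow[current]
        if task["type"] == "question":
            # Question tasks branch on the volunteer's answer.
            current = task["answers"][answers[current]]
        else:
            # Other task types simply continue to the next task.
            current = task["next"]
    return path
```

In this sketch, a volunteer who answers "yes" goes on to the drawing and text tasks, while one who answers "no" finishes after the first question.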

The Project Builder interface also allows project owners to do the following tasks: set up their project landing page; add others as collaborators; add pages outlining their research and introducing their team; create tutorials and field guides; add help text to assist volunteers with any given task; set up the Talk discussion area for their project; set the retirement limit for their subjects; and finally, download data exports of all classifications made by their volunteers.

Review process

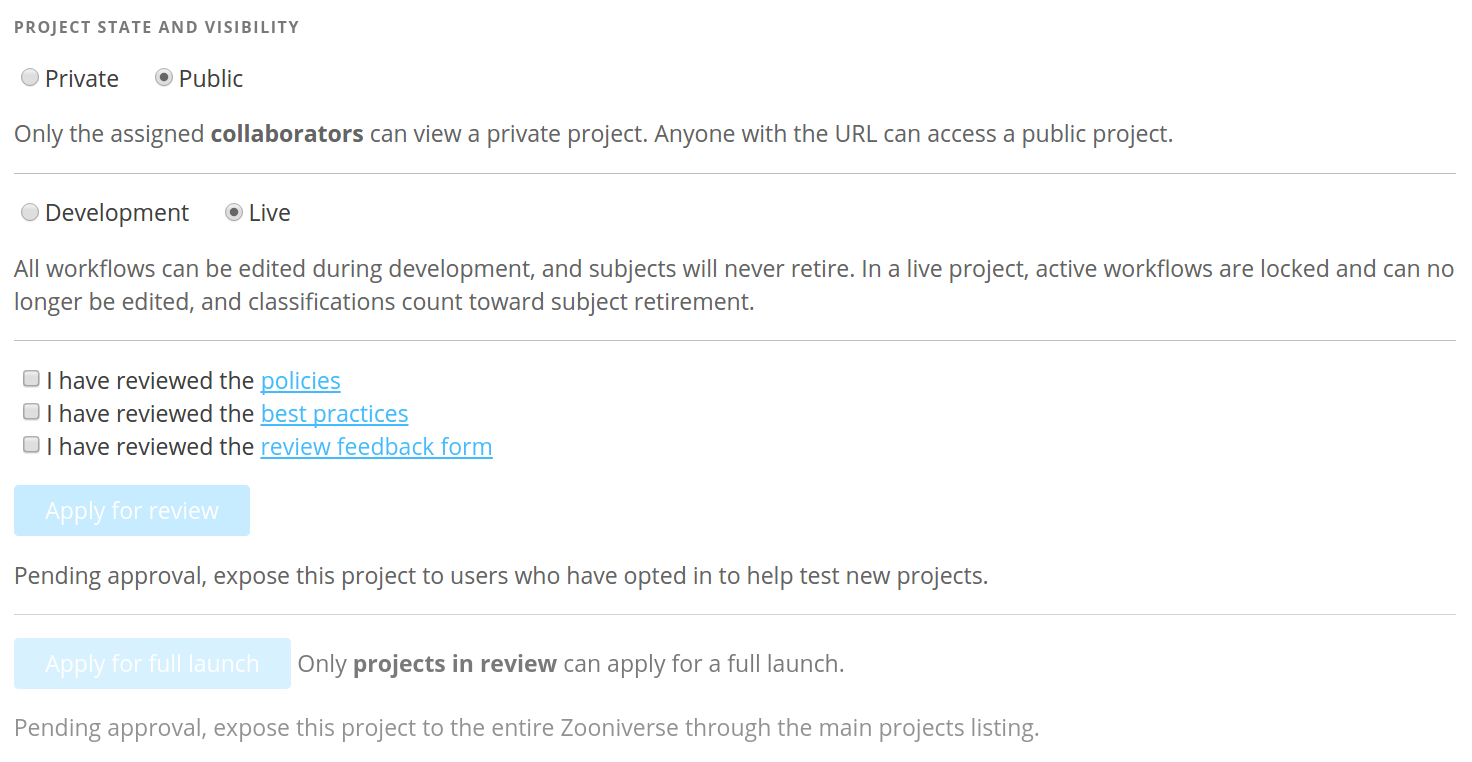

Project owners can control the visibility of their project via the Project Builder interface (figure 1). If a project is set to “Private,” only people who have been added as collaborators can view it. If the project is set to “Public,” anyone with the URL can access it. If the project is switched from “Development” to “Live,” the active workflows will be locked so no changes can be made, and the subjects will retire as they reach the retirement limit.
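Retirement can be sketched as a simple counting rule. The example below assumes that the retirement limit is a number of classifications per subject and that a subject retires once it has received that many; the function name and the subject identifiers are hypothetical.

```python
from collections import Counter


def retired_subjects(classified_subject_ids, retirement_limit):
    """Return the set of subjects that have reached the retirement limit.

    `classified_subject_ids` holds one entry per classification received,
    naming the subject that was classified.
    """
    counts = Counter(classified_subject_ids)
    return {
        subject_id
        for subject_id, count in counts.items()
        if count >= retirement_limit
    }


# Hypothetical classification stream: subject "s1" is classified three times.
stream = ["s1", "s2", "s1", "s3", "s1", "s2"]
done = retired_subjects(stream, retirement_limit=3)
```

With a limit of three, only "s1" is retired here; "s2" and "s3" would keep being shown to volunteers.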

Project owners who want to make their projects official Zooniverse projects listed on www.zooniverse.org apply to our review process. They do this in the Project Builder interface by clicking the “Apply for review” button after setting the visibility of their project to “Public” and the state to “Live.”

When a project is submitted for review, the internal Zooniverse team has a quick look to see whether it appears to be legitimate research, with an appropriate subject set (i.e. the researchers have ownership/permission for their images) and workflow. The team also reviews whether the subject set is large enough to require the help of Zooniverse volunteers, and whether automated processes are a viable alternative. Once these initial criteria are passed, the project is sent to the review community. This is a cohort of over 40,000 Zooniverse volunteers who have agreed to help test and review new projects. They are given a brief description of the project goals along with a link to the project and to a Google form that they can use to provide feedback. The feedback form asks a range of questions about the project, such as how easy the task is to perform, and whether the help text is useful. At the end of the form there is a yes/no question about the suitability of the project for the Zooniverse platform. Typically each project receives feedback from around 100 members of the review community. The review community is not provided with any formal criteria for judging suitability. We find that the majority of projects that make it to this stage receive a yes vote of over 90% from the community; anything under that number signals to the Zooniverse team that there may be major issues with the project that need to be addressed before a potential launch. Only one of the 49 projects submitted for review to date has been rejected for launch because the review community deemed it unsuitable. However, the owners of that project were still able to gather classifications by sharing and promoting it themselves.
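The 90% yardstick described above amounts to a short calculation. The sketch below is illustrative only: the function names and the sample responses are invented, and the Zooniverse team's actual decision also draws on the written feedback rather than on this number alone.

```python
def yes_fraction(votes):
    """Return the share of reviewers who answered yes (True) to the final question."""
    if not votes:
        raise ValueError("no review responses received")
    return sum(1 for vote in votes if vote) / len(votes)


def needs_attention(votes, threshold=0.9):
    """Flag a project whose yes share falls under the 90% level mentioned in the text."""
    return yes_fraction(votes) < threshold


# Hypothetical responses from about 100 reviewers.
strong_project = [True] * 92 + [False] * 8
weak_project = [True] * 85 + [False] * 15
```

Here strong_project has a yes share of 0.92 and is not flagged, while weak_project at 0.85 would be flagged for a closer look before launch.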

Project builder statistics

In the 18 months since the Zooniverse Project Builder was launched, over 500 people have attempted building their own project. Of these attempts, 306 have functioning workflows, and 132 have gathered more than 100 classifications.

A total of 49 projects have entered the review process, 23 of which are now fully launched on the Zooniverse platform. As a comparison, it took the Zooniverse team three years to build and launch a similar number of projects before the development of the Project Builder (figure 2). Notably, we have found that as each new project is launched, our registered volunteer base increases (figure 3).
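To put these counts in proportion, the short calculation below expresses each stage as a share of attempts. Because the text says only that "over 500" people attempted a project, 500 is used as a lower bound on the denominator, so the shares of attempts are upper bounds rather than exact figures.

```python
ATTEMPTS_LOWER_BOUND = 500  # the text says "over 500", so this is a minimum

# Counts reported in the text.
stage_counts = {
    "functioning workflow": 306,
    "more than 100 classifications": 132,
    "entered review": 49,
    "launched": 23,
}


def percent(part, whole):
    """Return part/whole as a percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


# Upper-bound share of all attempts reaching each stage.
share_of_attempts = {
    stage: percent(count, ATTEMPTS_LOWER_BOUND)
    for stage, count in stage_counts.items()
}

# Share of reviewed projects that have launched so far (both counts are exact).
launched_of_reviewed = percent(stage_counts["launched"], stage_counts["entered review"])
```

On these figures, at most about 61% of attempts have a functioning workflow, and roughly 47% of the projects that entered review have launched.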

Many of these newer projects have much smaller datasets than the older established projects like Galaxy Zoo. This means that the total number of classifications needed is much smaller, and the project timescales are much shorter. For example, the first iterations of Understanding Animal Faces and Snow Spotter both ran through their data in under three months, and the Computer Vision: Serengeti project was retired after all of its data was classified in under half a year. The Project Builder has also given rise to projects that cycle through multiple small datasets. One such example is Supernova Hunters, where a few thousand subjects are uploaded every week, and the volunteers complete them within a day or two.

Three of the launched Project Builder projects have already produced scientific publications (Campbell et al., 2015; Kosmala et al., 2016; Zevin et al., in press; Bahaadini et al., 2017; Crowston et al., 2017). See https://www.zooniverse.org/about/publications for a list of all Zooniverse-based publications.

Future developments

The Zooniverse development team continues to add functionality to the Project Builder. Since its launch, more task types have been added, along with new drawing tools—and these options will continue to grow. The team is also committed to providing the classification results in more accessible formats, allowing even more people to benefit from this open tool. Additionally, there is ongoing work to allow upload of a wider range of subject types. Upload of video subjects is now possible as an admin option, and has been utilized in the Arizona BatWatch project. Upload of audio files and multidimensional data is planned to follow soon.

As the Project Builder allows the number of projects hosted on the Zooniverse platform to grow at a much faster rate than ever before, management of project life cycles and their promotion will become increasingly important. In particular, this will ensure effort is being efficiently directed to deserving projects. To guarantee this, it is important that the Zooniverse volunteer community is given more control over the three main phases of a project’s lifecycle:

(1) Launch

Some volunteers already contribute to this stage as part of our review community; however, the final decision on whether a project should launch on the platform is made by the Zooniverse team. Giving volunteers greater control over whether a project should launch may help alleviate the bottleneck caused by an increasing number of projects requesting launch. These responsibilities should also be opened up to all members of the Zooniverse community, perhaps by creating a new section of the site where volunteers can view and assess all projects under review.

(2) Promotion

As the number of projects launched on the Zooniverse increases, project promotion will become ever more important. Projects can be promoted in various ways, including being placed at the top of the projects list on the main Zooniverse page, or being featured in a newsletter or blog post. The conduct of project owners should be considered when determining whether a project deserves promotion. Do they proactively engage with their volunteers? Are they analyzing and interpreting the data produced by their project? The community should be allowed to assess and rate projects based on these criteria.

(3) Retirement

Zooniverse automatically retires a project when the dataset is complete. However, some projects require shutting down before this goal is met, especially if it appears volunteers’ time is being wasted. In the event that a science team is not engaging with their community and not producing any results, it is important that the Zooniverse community has the power to propose that the project be removed from the active list.

Concluding remarks

Zooniverse has proven to be a transformative tool for research and for engaging the public in real science. Since launching Galaxy Zoo in 2007, Zooniverse has supported over 70 projects, with hundreds of researchers engaging over 1.5 million registered volunteers around the world. Since its launch in July 2015, the Project Builder has enabled an ever-growing number of research teams to launch their own people-powered research projects and has fostered an ever-growing community of online citizen scientists. The major challenges and opportunities that lie ahead are (1) further empowering the Zooniverse volunteer community to play a critical role in the launch, promotion, and retirement of projects; (2) continuing to increase the Zooniverse volunteer base as new projects join; and (3) publicizing the Project Builder to universities, galleries, museums, libraries, archives, etc., so that a wide community of researchers knows about this free, easy-to-use tool for crowd-sourced research.

Acknowledgements

The Zooniverse Project Builder was made possible by a Google Global Impact Award. The authors would also like to thank the entire Zooniverse team for their work making this a reality. We would like to extend special thanks to our Zooniverse volunteer review community, who have provided excellent feedback to ensure all projects launched are of the highest quality, and to all Zooniverse volunteers, without whom Zooniverse would not be possible.

References

Bahaadini, S., N. Rohani, S. Coughlin, M. Zevin, V. Kalogera, & A. Katsaggelos. (2017). “Deep Multi-view Models for Glitch Classification.” In 42nd IEEE International Conference on Acoustics, Speech, and Signal Processing: March 5th-9th 2017, New Orleans, USA.

Campbell, H., R. Cartier, M. Smith, J. Anderson, C. Inserra, C. Lintott, G. Miller, C. Allen, B. Carstensen, G. Hines, A. McMaster, E. Paget, K. Maguire, S.J. Smartt, K.W. Smith, M. Sullivan, S. Valenti, O. Yaron, D. Young, I. Manulis, D. Wright, K. Chambers, H. Flewelling, M. Huber, E. Magnier, J. Tonry, C. Waters, R.J. Wainscoat. (2015). “ATel #7254: PESSTO spectroscopic classification of optical transients.” In The Astronomer’s Telegram. Consulted December 1, 2016. Available http://www.astronomerstelegram.org/?read=7254

Crowston, K., C. Østerlund, T.K. Lee. (2017). “Blending Machine and Human Learning Processes.” In Advances in Teaching and Learning Minitrack, Hawaii International Conference on System Sciences: January 4th-7th 2017, Hawaii, USA. Consulted December 1, 2016. Available http://scholarspace.manoa.hawaii.edu/handle/10125/41159

Google Global Impact Awards. Consulted January 30th, 2017. Consulted December 1, 2016. Available https://www.google.org/global-giving/global-impact-awards/zooniverse

Kosmala, M., A. Crall, R. Cheng, K. Hufkens, S. Henderson, A.D. Richardson. (2016). “Season Spotter: Using Citizen Science to Validate and Scale Plant Phenology from Near-Surface Remote Sensing.” Remote Sens 8(9), 726.

Lintott, C., K. Schawinski, A. Slosar, K. Land, S. Bamford, D. Thomas, J. Raddick, R.C. Nichol, A. Szalay, D. Andreescu, P. Murray, L. van den Berg. (2008). “Galaxy Zoo: morphologies derived from visual inspection of galaxies from the Sloan Digital Sky Survey.” Monthly Notices of the Royal Astronomical Society 389(3), 1179-89.

Zevin, M., S. Coughlin, S. Bahaadini, E. Besler, N. Rohani, S. Allen, M. Cabero, K. Crowston, A. Katsaggelos, S. Larson, T. Lee, C. Lintott, T. Littenberg, A. Lundgren, C. Oesterlund, J. Smith, L. Trouille, V. Kalogera. (in press). “Gravity Spy: Integrating Advanced LIGO Detector Characterization, Machine Learning, and Citizen Science.” SAO/NASA ADS arXiv e-prints Abstract Service. Consulted December 1, 2016. Available http://adsabs.harvard.edu/abs/2016arXiv161104596Z

Cite as:

Trouille, Laura, Chris Lintott, Grant Miller and Helen Spiers. "DIY Zooniverse citizen science project: engaging the public with your museum’s collections and Data." MW17: MW 2017. Published January 30, 2017. Consulted .

https://mw17.mwconf.org/paper/diy-your-own-zooniverse-citizen-science-project-engaging-the-public-with-your-museums-collections-and-data/